Ever watched a kids’ movie where the animated human characters are realistic but not quite right, and maybe a bit creepy? Then you’ve probably visited what’s called the “uncanny valley.” The uncanny valley effect has been poorly understood, but new functional MRI (fMRI) brain scans help explain why we feel repulsed when confronted with fake humans that appear too human.

People respond positively to characters that share some characteristics with humans – dolls, cartoon animals, or robots like R2-D2. As such an artificial agent becomes more human-like, it becomes more likeable. But at some point that upward trajectory stops, and the agent is instead perceived as strange and disconcerting. Many viewers, for example, find the characters in the animated film The Polar Express off-putting. Most modern androids are also thought to fall into the uncanny valley.

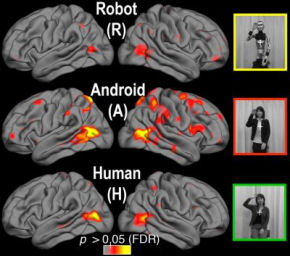

To explore why this effect occurs, Ayse Pinar Saygin, of the University of California, San Diego, took a peek inside the brains of people viewing videos of a human-looking android, a human, and a robot-looking robot. Her findings, published in the journal Social Cognitive and Affective Neuroscience, suggest that the effect is due to a perceptual mismatch between appearance and motion.

Saygin and her colleagues set out to discover whether what they call the “action perception system” in the human brain is tuned more to human appearance or to human motion. Their broader goal was to identify the functional properties of the brain systems that allow us to understand others’ body movements and actions.

The subjects were shown 12 videos of the human-looking android Repliee Q2 performing ordinary actions such as waving, nodding, taking a drink of water and picking up a piece of paper from a table. They were also shown videos of the same actions performed by the human on whom the android was modelled and by a stripped-down version of the android, with its skin removed to reveal the underlying metal joints and wiring.

The researchers say that what they saw was, in essence, evidence of a mismatch. The brain lit up when the human-like appearance of the android and its robotic motion “didn’t compute.”

“The brain doesn’t seem tuned to care about either biological appearance or biological motion per se,” said Saygin. “What it seems to be doing is looking for its expectations to be met – for appearance and motion to be congruent.”

In other words, if it looks human and moves like a human, we are OK with that. If it looks like a robot and acts like a robot, we are OK with that, too; our brains have no difficulty processing the information. The trouble arises when – contrary to a lifetime of expectations – appearance and motion are at odds.

“As human-like artificial agents become more commonplace, perhaps our perceptual systems will be re-tuned to accommodate these new social partners,” Saygin concluded. “Or perhaps, we will decide it is not a good idea to make them so closely in our image after all.”
