Writing about their findings in the journal PLoS ONE, Italian researchers say that male homosexuality in humans can be explained by a model based on sexually antagonistic selection, where genetic factors spread in the population by giving a reproductive advantage to one sex while disadvantaging the other.

It’s generally believed that male homosexuality is influenced by psycho-social factors as well as a genetic component. But male homosexuality is difficult to explain under Darwinian evolutionary models, because carriers of genes predisposing towards male homosexuality would be expected to reproduce less than average, which suggests that homosexuality should progressively disappear from a population.

Now, however, Andrea Camperio Ciani and Giovanni Zanzotto at the University of Padova and Paolo Cermelli at the University of Turin say that sexually antagonistic selection can explain the persistence of male homosexuality. Their work is based on a previous study that found that females in the maternal line of male homosexuals were more fertile than average.

The newly developed model shows that the interaction of male homosexuality with increased female fecundity within human populations is a complex dynamic, resulting in the maintenance of male homosexuality at stable and relatively low frequencies. The researchers say that their findings invite a shift in thinking away from homosexuality being viewed as a detrimental trait (evolutionarily speaking), instead putting it within the wider evolutionary framework of a characteristic with gender-specific benefits, which promotes female fecundity.
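The general idea of sexually antagonistic selection can be illustrated with a minimal sketch. This is not the researchers' model: it assumes a single autosomal locus with two alleles, random mating, and hypothetical fitness values chosen only for illustration. Allele `A` raises fitness in females and lowers it in males; the function names and all numbers are assumptions.

```python
def next_generation(p_f, p_m, w_female, w_male):
    """One generation of selection at a single two-allele locus.

    p_f, p_m: frequency of allele A in eggs and in sperm.
    w_female, w_male: fitness of genotypes (AA, Aa, aa) in each sex.
    Returns the allele-A frequencies in the next generation's eggs and sperm.
    """
    # Random union of gametes gives the zygote genotype frequencies.
    AA = p_f * p_m
    Aa = p_f * (1 - p_m) + p_m * (1 - p_f)
    aa = (1 - p_f) * (1 - p_m)

    def after_selection(w):
        # Frequency of A among the gametes of surviving adults of one sex.
        w_AA, w_Aa, w_aa = w
        mean_w = AA * w_AA + Aa * w_Aa + aa * w_aa
        return (AA * w_AA + 0.5 * Aa * w_Aa) / mean_w

    return after_selection(w_female), after_selection(w_male)


def simulate(p0, generations, w_female, w_male):
    """Iterate the model and return the mean allele-A frequency at the end."""
    p_f = p_m = p0
    for _ in range(generations):
        p_f, p_m = next_generation(p_f, p_m, w_female, w_male)
    return (p_f + p_m) / 2


# Hypothetical fitness values for genotypes (AA, Aa, aa); illustration only.
W_FEMALE = (1.3, 1.2, 1.0)   # A benefits females
W_MALE = (0.7, 0.9, 1.0)     # A is costly in males
W_NEUTRAL = (1.0, 1.0, 1.0)  # no female benefit, for comparison

from_rare = simulate(0.01, 2000, W_FEMALE, W_MALE)
from_common = simulate(0.99, 2000, W_FEMALE, W_MALE)
without_benefit = simulate(0.5, 2000, W_NEUTRAL, W_MALE)

print("started rare:", from_rare)
print("started common:", from_common)
print("male cost only:", without_benefit)
```

With these assumed values, the allele is neither lost nor fixed whether it starts rare or common, while the same male cost without a female benefit drives it towards extinction. Different fitness values can give different outcomes, so the sketch shows only that such persistence is possible, not how often it occurs.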

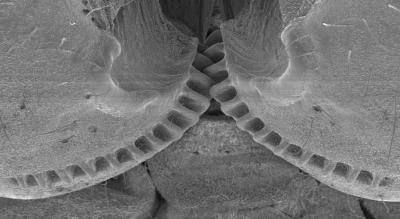

Delivering a fecundity benefit to one sex while disadvantaging the other is a key mechanism through which high levels of genetic variation are maintained in biological populations, say the researchers. They contend that male homosexuality is just the first example of an unknown number of sexually antagonistic traits, which contribute to the maintenance of the natural genetic variability of humans.

An unexpected implication of this hypothesis concerns the impact that the sexually antagonistic genetic factors for male homosexuality have on the overall fecundity of a population. The findings suggest that the proportion of male homosexuals may signal a corresponding proportion of females with higher fecundity. All else being equal, these factors always produce a net increase in the fecundity of the whole population. This increase grows as the population's baseline fecundity decreases, meaning that the genes influencing male homosexuality end up acting as a “buffer” against any external factors that lower the overall fecundity of the population.

