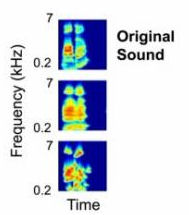

Eavesdropping on the brain’s internal monologs or communicating with locked-in patients may one day be a reality, as scientists learn how to decode the brain’s electrical activity into audio signals. Reported in PLoS Biology, the technique reads electrical activity in a region of the human auditory system called the superior temporal gyrus (STG). By analyzing the patterns of STG activity, the researchers were able to reconstruct words that the subjects listened to in normal conversation.

“This is huge for patients who have damage to their speech mechanisms because of a stroke or Lou Gehrig’s disease and can’t speak,” said study co-author Robert Knight, a University of California Berkeley professor of neuroscience. “If you could eventually reconstruct imagined conversations from brain activity, thousands of people could benefit.”

That may be some way off, however, as the current decoding capability is based on the sounds a person actually hears. To reconstruct internal conversations, the same decoding principles would have to apply to a person’s internal verbalizations. But co-researcher Brian N. Pasley says the technique is valid. “Hearing the sound and imagining the sound activate similar areas of the brain. If you can understand the relationship well enough between the brain recordings and sound, you could either synthesize the actual sound a person is thinking, or just write out the words with a type of interface device.”

“We think we would be more accurate with an hour of listening and recording and then repeating the word many times,” Pasley said. But because any realistic device would need to accurately identify words the first time they are heard, he decided to test the models using only a single trial.

“I didn’t think it could possibly work, but Brian did it,” Knight said. “His computational model can reproduce the sound the patient heard and you can actually recognize the word, although not at a perfect level.”

The ultimate goal of the study was to explore how the human brain encodes speech and determine which aspects of speech are most important for understanding. “At some point, the brain has to extract away all that auditory information and just map it onto a word, since we can understand speech and words regardless of how they sound,” Pasley explained. “The big question is, what is the most meaningful unit of speech? A syllable, a phone, a phoneme? We can test these hypotheses using the data we get from these recordings.”

