24 October 2013

Researchers expose Google's massive network expansion

by Will Parker

Google has dramatically increased the number of sites around the world from which it serves client queries, say researchers who accidentally discovered a massive repurposing of existing infrastructure to change the way that Google processes web searches.

The researchers, from the University of Southern California, say they had not originally set out to document the search giant's rapid growth. "We had developed techniques to locate the servers, without requiring access to the users they serve, and it just so happened we exposed this rapid expansion," Xun Fan explained.

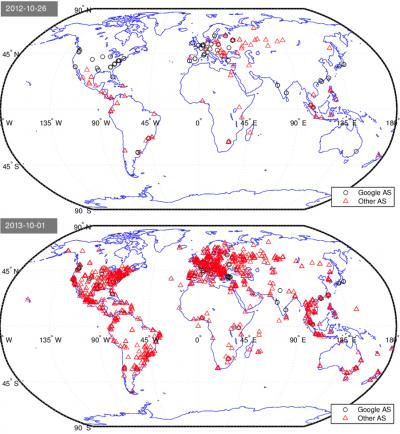

The changes over the last 10 months were revealed using a new method of tracking down and mapping servers, which identifies when servers share a datacenter and estimates where that datacenter is. Fan says that from October 2012 to late July 2013, the number of locations serving Google's search infrastructure increased from a little less than 200 to a little more than 1,400.

The researchers say that much of this expansion reflects Google utilizing client networks (such as Time Warner Cable) that it already relied on for hosting content like YouTube videos, and reusing them to relay - and speed up - user requests and responses for search.

"Google already delivered YouTube videos from within these client networks," said Matt Calder, lead author of a study that documents the changes. "But they've abruptly expanded the way they use the networks, turning their content-hosting infrastructure into a search infrastructure as well."

Previously, according to Calder, if you submitted a search request to Google, your request would go directly to a Google datacenter. Now, he says, your search request will first go to the regional network, which relays it to the Google datacenter. While this extra hop would appear to make the search take longer, the process actually speeds it up.

This is because data connections typically need to "warm up" to get to their top speed - the continuous connection between the client network and the Google datacenter eliminates some of that warming up lag time. In addition, the occasional loss of data packets is dealt with more effectively by designating the client network as a "middleman," so lost packets can be spotted and replaced much more quickly.
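The "warm up" effect can be illustrated with a rough back-of-the-envelope model. The sketch below is not taken from the study: it assumes a simplified connection whose sending window doubles every round trip, and the function names, round-trip times and response size are all hypothetical values chosen for illustration. It compares a cold connection over a long path with a cold connection over a short path to a nearby relay that forwards over an already warm link.

```python
def slow_start_rounds(segments: int, initial_window: int = 10) -> int:
    """Round trips needed to deliver `segments` packets when the
    sending window starts at `initial_window` and doubles each round.
    This is a simplified model of connection warm-up (an assumption)."""
    window, sent, rounds = initial_window, 0, 0
    while sent < segments:
        sent += window
        window *= 2
        rounds += 1
    return rounds


def direct_latency_ms(segments: int, rtt_ms: float, initial_window: int = 10) -> float:
    """Cold connection straight to a distant datacenter:
    one round trip to set up, then warm-up rounds over the long path."""
    return (1 + slow_start_rounds(segments, initial_window)) * rtt_ms


def relayed_latency_ms(segments: int, client_rtt_ms: float,
                       backend_rtt_ms: float, initial_window: int = 10) -> float:
    """Cold connection to a nearby relay, which forwards over a
    persistent, already warm connection (assumed to need one round trip)."""
    near = (1 + slow_start_rounds(segments, initial_window)) * client_rtt_ms
    return near + backend_rtt_ms


if __name__ == "__main__":
    # Hypothetical numbers: a 40-packet response, 100 ms to the datacenter,
    # or 20 ms to a relay that sits 80 ms from the datacenter.
    print(direct_latency_ms(40, rtt_ms=100))                           # 400
    print(relayed_latency_ms(40, client_rtt_ms=20, backend_rtt_ms=80))  # 160
```

Under these assumed figures, the relayed path finishes sooner even though it covers the same total distance, because the slow warm-up rounds happen over the short hop only. The model ignores packet loss, which the middleman arrangement is also said to handle more quickly.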

Google's rapid expansion, claims the study, tackles major causes of slow transfers head-on. The strategy appears to have benefits for web users, ISPs and Google, say the team. Users have a better web browsing experience, ISPs lower their operational costs by keeping more traffic local, and Google is able to deliver its content to web users more quickly.

The study was presented at the SIGCOMM Internet Measurement Conference in Spain this week.
