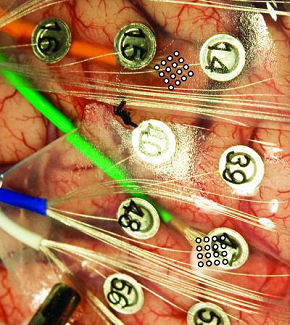

In an early step toward letting severely paralyzed people speak with their thoughts, researchers have translated brain signals into words using two grids of 16 microelectrodes implanted beneath the skull but atop the brain.

“We have been able to decode spoken words using only signals from the brain with a device that has promise for long-term use in paralyzed patients who cannot speak,” said the University of Utah’s Bradley Greger, who details his work in the Journal of Neural Engineering.

Greger’s subject was an epileptic man who had already undergone a craniotomy (temporary partial skull removal), which allowed the experimental microelectrodes to be placed directly onto the surface of his brain. Greger’s team then recorded brain signals as the patient repeatedly read each of 10 words that might be useful to a paralyzed person: yes, no, hot, cold, hungry, thirsty, hello, goodbye, more and less.

Later, the team tried figuring out which brain signals represented each of the 10 words. When they compared any two brain signals – such as those generated when the man said the words “yes” and “no” – they were able to distinguish brain signals for each word 76 percent to 90 percent of the time.

“This is proof of concept,” Greger says. “We’ve proven these signals can tell you what the person is saying well above chance. But we need to be able to do more words with more accuracy before it is something a patient really might find useful.”

Greger used a new kind of non-penetrating microelectrode that sits on the brain without poking into it. These electrodes are known as microECoGs because they are a small version of the much larger electrodes used for electrocorticography, or ECoG, developed a half century ago.

Because the microelectrodes do not penetrate brain matter, they are considered safe to place on speech areas of the brain – something that cannot be done with penetrating electrodes that have been used in experimental devices to help paralyzed people control a computer cursor or an artificial arm.

Greger used the microelectrodes to detect weak electrical signals from the brain generated by a few thousand neurons. Two grids, each with 16 microECoGs spaced 1 millimeter (about one-25th of an inch) apart, were placed over two speech areas of the brain, one grid per area. The first was the facial motor cortex, which controls movements of the mouth, lips, tongue and face – basically the muscles involved in speaking. The second was Wernicke’s area, a little-understood part of the human brain tied to language comprehension.

The researchers found that each spoken word produced varying brain signals, and thus the pattern of electrodes that most accurately identified each word varied from word to word. They say that supports the theory that closely spaced microelectrodes can capture signals from single, column-shaped processing units of neurons in the brain.

The researchers were most accurate – 85 percent – in distinguishing brain signals for one word from those for another when they used signals recorded from the facial motor cortex. They were less accurate – 76 percent – when using signals from Wernicke’s area. Interestingly, combining data from both areas didn’t improve accuracy, showing that brain signals from Wernicke’s area don’t add much to those from the facial motor cortex.

When the scientists selected the five microelectrodes on each 16-electrode grid that were most accurate in decoding brain signals from the facial motor cortex, their accuracy in distinguishing one of two words from the other rose to almost 90 percent. In the more difficult test of distinguishing brain signals for one word from signals for the other nine words, the researchers initially were accurate 28 percent of the time. However, when they focused on signals from the five most accurate electrodes, they identified the correct word almost half (48 percent) of the time.
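
To put those figures in context, the short Python sketch below compares the reported accuracies with what random guessing would achieve. It assumes each word is equally likely in a test, so chance is simply one divided by the number of choices; that assumption, and the helper name `chance_level`, are illustrative and not taken from the study.

```python
from math import comb

# The 10 words the patient read, as listed in the article.
WORDS = ["yes", "no", "hot", "cold", "hungry", "thirsty",
         "hello", "goodbye", "more", "less"]

def chance_level(n_choices: int) -> float:
    """Accuracy expected from uniform random guessing (assumes balanced choices)."""
    return 1.0 / n_choices

n_pairs = comb(len(WORDS), 2)            # number of distinct two-word comparisons
pairwise_chance = chance_level(2)        # guessing between two words
ten_way_chance = chance_level(len(WORDS))  # guessing among all 10 words

# Accuracies reported in the article, paired with the matching chance level.
reported = {
    "pairwise, Wernicke's area": (0.76, pairwise_chance),
    "pairwise, facial motor cortex": (0.85, pairwise_chance),
    "pairwise, best five electrodes (almost)": (0.90, pairwise_chance),
    "one of 10, initial": (0.28, ten_way_chance),
    "one of 10, best five electrodes": (0.48, ten_way_chance),
}

print(f"distinct word pairs: {n_pairs}")
for label, (accuracy, chance) in reported.items():
    print(f"{label}: {accuracy:.0%} vs. {chance:.0%} by chance "
          f"({accuracy / chance:.1f}x)")
```

Under that assumption, the one-of-10 results look low in absolute terms but are several times better than guessing, which fits Greger’s description of the work as proof of concept.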

“It doesn’t mean the problem is completely solved and we can all go home,” Greger says. “It means it works, and we now need to refine it so that people with locked-in syndrome could really communicate. The obvious next step is to do it with bigger microelectrode grids. We can make the grid bigger, have more electrodes and get a tremendous amount of data out of the brain, which probably means more words and better accuracy.”
